Insights on Agentic Intelligence, Systems Design & Applied AI

Mitochondria has joined the Sarvam Startup Program

Our Indian entity has been accepted into the Sarvam Startup Program, a cohort of teams building on Sarvam's stack of speech, language and translation models for Indian languages. This is a short note on what that means for us, and for the voice work we have been doing across financial services, manufacturing, agriculture, public transport, research, and cross-border trade.

The Purchase Conversation: How Communication Science Reshapes eCommerce

Petty and Cacioppo's Elaboration Likelihood Model describes two processing routes that operate during most purchase decisions. Central processing assesses the product's merits. Peripheral processing responds to how the information is presented, by whom, and whether the interaction seems trustworthy. A system that addresses both routes produces significantly more valuable results than one that handles only the first. This is the design space that Cornea, our commerce intelligence product, occupies.

What Happens When a Manufacturing Business Automates Its First Workflow

Quote generation in manufacturing is seldom anyone's main role. It is handled by managers, salespeople, and engineers alongside their core duties, so response times vary from hours to days, some quotes are never sent, and nothing is tracked. We introduced an agentic system into this process. Within the first month, the lead-to-follow-up cycle fell from about three days to just five minutes. What happened afterwards was more intriguing than the speed improvement alone.

The Quiet Shift in Manufacturing Intelligence

The typical response to knowledge dependency in manufacturing is documentation. This method has been tried for decades and often falls short. The reason lies in its structure: documentation is a separate task from the work itself. An engineer who has just finished a complex assessment is immediately faced with the next enquiry. Choosing between documenting and starting the next task is never a true choice. Any solution that adds extra effort will face the same limitation.

Step by Step, Ferociously

Most companies default to one or the other. They are either patient and gentle, building carefully but without urgency, or they are aggressive and impatient, shipping fast but accumulating debt in the form of shortcuts that eventually require correction. The combination is rarer and more difficult to sustain. This piece describes how it operates in practice.

When AI Builds What the Organisation Always Needed

The most valuable outcome of an AI deployment is often not the system itself but the structured operational knowledge that the implementation process creates. This perspective examines how that knowledge is built, why it compounds, and what it means for how organisations should evaluate AI investment.

Where Competitive Advantage Lives in an AI-First Company

The technology is commoditised. Foundation models are open-source or accessible through APIs at declining cost. Cloud infrastructure is available on demand. Agent frameworks are proliferating. Any company with competent engineering can assemble a capable AI system. The question that remains, and the one that determines which companies survive the next five years, is where competitive advantage lives when the underlying technology is no longer a differentiator. Three frameworks, from McKinsey, from venture capital moat analysis, and from AI-first operating model research, converge on the same answer. The advantage is not in the AI. It is in what the AI learns about a specific context, how that learning compounds over time, and how difficult it becomes to replicate once the system is embedded in the operations it serves.

5 Skills That Become More Valuable With Greater AI Adoption

There is a framework circulating from Elevation Capital that identifies five skills that become more valuable with deeper AI adoption. Taste: AI generates, you decide if it is right for your brand. Context synthesis: AI handles tasks, you connect dots across functions. Judgement: AI creates options, you choose the right one. Strategic instinct: AI optimises, you question whether it is the right problem. Trust building: AI writes your messages, you build relationships. The framework is appealing because it offers reassurance. Humans are not displaced by AI. They are elevated by it. The reassurance is warranted, but the framework is incomplete. It describes the division of labour between human and machine. It does not describe how that division is operationalised inside an organisation. And the operationalisation is where most deployments succeed or fail.

How Persuasive Communication Enables and Accelerates Enterprise AI Deployments

There is a technique in applied persuasion that works reliably across industries, cultures, and seniority levels. You do not describe the prospect's problem. You describe a pattern. You make it neutral, common, non-accusatory. Knowledge living in people rather than systems. Decisions made with partial context. Automation existing, but intelligence fragmented. Then you ask which of these feels familiar. What happens next is the important part: they start supplying the intelligence themselves. They tell you where it hurts, how it developed, who is affected, and what they have already tried. The conversation has shifted from persuasion to recognition. And that shift determines whether the deployment that follows will succeed or stall.

Enterprise AI in the EU-India Corridor

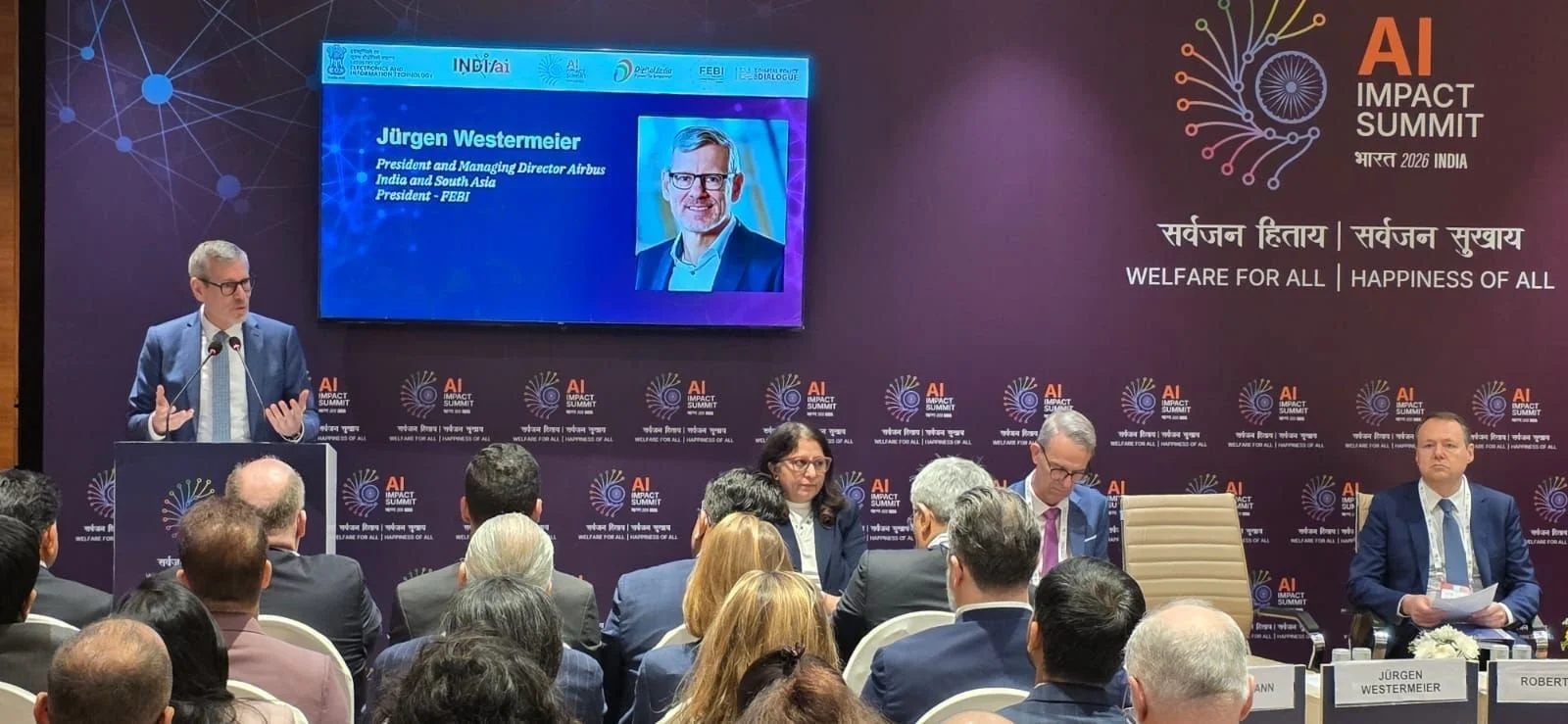

The twin transition is not two parallel initiatives. It is one integrated industrial strategy where digital transformation and sustainability are mutually dependent. At the India AI Impact Summit 2026, a roundtable organised by the Federation of European Business in India brought together the EU Commission, Airbus, Schneider Electric, SAP, Ericsson, and Merck Life Science to examine what this integration requires from AI. The answer was consistent across every panellist: AI embedded in operational design from the outset, governance as architecture rather than afterthought, trust as the precondition for enterprise adoption, and interoperability across the EU-India regulatory landscape. This is the corridor Mitochondria was built for.

Minimal Viable Trust for Agentic AI

Apoorva Goyal of Insight Partners, a firm with close to $90 billion in assets under management, meets approximately 100 AI companies a month. His assessment of what separates the companies that scale from those that stall is unambiguous: governance is not a compliance function bolted onto the product after traction. It is the product. Enterprises today lead procurement conversations with questions about auditability, traceability, data handling, and kill switches before they discuss capability. The costs of agentic AI going wrong are high enough that organisations will spend millions ensuring governance is in place before signing a contract worth half that. This inversion, where trust precedes capability in the buying decision, is the defining dynamic of the agentic AI market.

The Evidence Gap in Every AI Deployment Decision

Yanagizawa-Drott presented a scenario to the audience involving district hospitals in Uttar Pradesh. He explained that AI can either automate diagnosis or augment the doctor's decision-making. Ninety percent of the audience preferred augmentation. He then shared evidence from a randomised experiment in Ghana, where full automation increased hiring success by 70%, while the augmented approach performed worst. The key question is not which method is better overall, but what evidence supports your choice in your particular context.

From Smart Ports to Thinking Ports

India's port infrastructure handles 95% of the country's trade by volume and 70% by value. The physical capacity exists. What does not yet exist, at most ports, is the intelligence layer that would connect fragmented systems, standardise processes across stakeholders, and enable the shift from reactive operations to anticipatory decision-making. The distinction between a smart port and a thinking port, articulated at the India AI Impact Summit 2026, captures the challenge precisely. Smart ports have technology. Thinking ports have judgement. The distance between the two is architectural.

What Infrastructure Teams Already Know About Scaling AI

The moderator asked the room to raise their hands. Compute, networking, data pipelines, security, or organisational operating model: which is the biggest barrier to scaling AI? The infrastructure professionals, the people who spend their days building networks and securing systems, pointed to organisation and operating model. The people closest to the technology understand something that the broader AI conversation has been slow to absorb. The machinery works. The question is whether the organisation around it is designed to let it.

93% Confidence, 9% Architecture: The Real Barrier to Industrial AI

The confidence is there. Ninety-three percent of CXOs surveyed believe they will see positive returns on AI investments within one to three years. The ambition is there. Indian organisations expect AI-supported business processes to nearly double, from 23% to 41%, within two years. What remains absent is the architecture to deliver on either. Only nine percent of organisations are approaching AI holistically. The rest are running pilots, accumulating enthusiasm, and waiting for something to bridge the distance between demonstration and production. That bridge is architectural, and building it requires a fundamentally different approach to how AI enters an organisation.

From Principles to Systems in Agricultural AI

The principles are settled. Inclusive. Governed. Co-designed. Data-sovereign. Open. Every panel at every agricultural technology gathering now recites these commitments with genuine conviction. What remains unsettled is how these principles translate into systems that actually function across the full complexity of a smallholder's operational reality. The gap between principled consensus and operational architecture is where agricultural AI will either fulfil its promise or join a long history of development technologies that worked in demonstrations and dissolved in practice.

Building AI Infrastructure for the Organisations That Need It Most

Agentic AI is designed to undertake comprehensive workflows and exercise judgement within defined boundaries. It does not merely execute predefined tasks according to rigid rules. It navigates complexity, handles exceptions, and makes contextual decisions that previously required human attention. This requires organisational readiness that most organisations lack: data infrastructure, process clarity, technical capability, and leadership prepared for a different relationship between human and artificial intelligence. Organisations that wait until they are ready before deploying AI often wait indefinitely. The work of building data infrastructure, documenting processes, and developing integration capability is not urgent until something demands it. Deployment itself is a forcing function for readiness. We design our deployments to harness this constructively, building readiness through deployment rather than waiting for readiness before deployment begins.

Structuring the Unstructured: How AI Transforms Operational Uncertainty into Market Capability

When an organisation adopts AI to manage a process previously done manually, something occurs before the system is operational. The system cannot function on ambiguity; it needs clear inputs, explicit logic, and defined outputs. This requirement creates a forcing function: to deploy the system, the organisation must articulate what was previously implicit. This structuring task is often seen as implementation overhead. In our experience, it is frequently where the most value is generated.

AI in Premium Real Estate: Enabling Brand Experience at Scale

In premium real estate, the product is not just the property. It is the experience of buying, owning, and living. The challenge is that premium developers generate an extraordinary volume of customer interactions, and when these are handled manually by teams stretched across thousands of concurrent customers, consistency becomes impossible. Most developers have invested in CRM systems, lead platforms, and customer portals. What remains missing is intelligence that connects these systems and acts on the connections. An agentic system does not add another tool to the stack. It provides the intelligence layer that makes existing investments actionable. The premium brand experience becomes infrastructure rather than aspiration, happening consistently because it is designed into systems rather than dependent on individual heroics.

Scaling AI Without Losing the Human: Why Governance-First Deployment Wins

There is an emerging distinction in how people relate to AI systems. Some use AI as a tool, applying it to tasks and accepting its outputs. Others govern AI as a capability, shaping how it operates, monitoring its performance, intervening when it drifts, and continuously improving how it integrates with operations. The difference matters enormously. Using AI captures efficiency gains. Governing AI captures strategic advantage. The organisations that benefit most from AI approach it as a reallocation opportunity rather than a replacement exercise. They ask not "which jobs can we eliminate?" but "how can we redeploy human capability to where it creates the most value?" The future of work is not humans versus AI. It is humans amplified by AI, with governance designed in from the start rather than bolted on after problems emerge.